I keep telling myself that I am not an Apple “fan boy”. If it were not for work, I would not choose to use a MacBook or macOS. However, I do admit to being a longtime iPhone user and to owning some Apple TVs, iPads, and two Apple Watches. But I write this blog post from a Linux machine using an open-source browser. My most recent acquisition of the Apple mind-washing kind is my AirPods Pro. I swear to goodness, if used correctly, your AirPods make you healthier and smarter. If they could only make me better looking, taller, and add some hair. But I digress…

How AirPods make you smarter and healthier

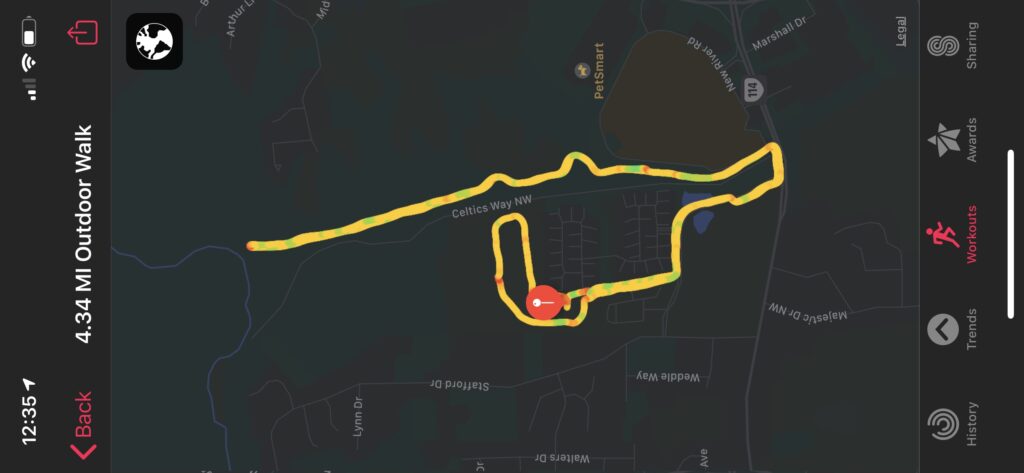

How do the AirPods accomplish this amazing task of making one healthier and smarter at the same time? Well, it does require some cooperation on the wearer’s part. One of my blog posts on cahallracing.com was about how my Apple Watch helped me start running. I published a similar article on Medium.com to gain some experience on that platform as well. Those posts outline how my watch helped me track my walking and running workouts with many metrics. This was classic “gamification” that encouraged me to keep moving faster and more often – even when I did not feel like it.

The AirPods add another dimension – audiobooks. I have often wished I read more books: business books, the “Classics”, documentary books – but I hate taking the time to sit down and read. It is just not me. For my health and personal performance while racing cars, I have committed to walking an average of 10k steps per day, every week, month, and year. The downside, even with the watch’s gamification, is that it can be tearfully boring. Before my AirPods, I usually just thought and solved problems in my head while I walked. I could hear my dad’s voice encouraging me to “use your head and think while it is still free – before they figure out how to tax it”.

Do AirPods help you exercise or does exercise help you use the AirPods?

Problem solving is great – but it does not require walking 10k steps, and I already do plenty of it in meetings and at my computer. However, as previously mentioned, I had always wanted to read more books, but was not willing to sit on a couch and do that for hours. I spend almost an hour each day (some days much more) walking.

Now I am walking and “reading” (listening) at the same time. Seriously great books. The most awesome part is that my two goals are mutually reinforcing. Some days I really want to get to the next chapter of the book, and that helps me get my gear on to go for my walk or run. Other days, when I want to walk, I realize I have some interesting information to consume. Together they are a more powerful motivator for getting me moving and driving me to reach my daily, weekly, and monthly goals than my watch alone.

More is better (Health / Knowledge)

I have “read” more books in 2020 than I think I have read in all previous years combined (I don’t count college books that were required). This is true even though 2020 is only half over. My biggest regret is not discovering Audible and getting that subscription earlier. I am going to walk anyway, or is it that I want to finish that book anyway? It really does not matter which, as both are happening more easily and frequently now.

So here I go complimenting Apple for making me healthier and smarter. Android also supports Audible, and there is a multitude of wireless earbud vendors. Still, I like the amazing integration of wearing my watch and listening to Audible while my phone and watch track my steps and distance metrics. I know it is only a click on my watch face to take a work call if needed.

May you live in interesting times.